Avoiding LLM Model Collapse

27 Mar 2026

Large Language Models (LLMs, and what is currently being called AI) are incredibly divisive right now. On one side you have those hyping them up as a small step away from ushering in the singularity. On the other side are those who think they’re a colossal waste of electricity and water, that all they produce is “slop”, and that they’re going to take away everyone’s jobs. As with most things in life, the reality is likely somewhere in the middle.

The issue I want to discuss is the idea of “Model Collapse”: why it’s bad, why it too might be overblown, and ways to avoid it.

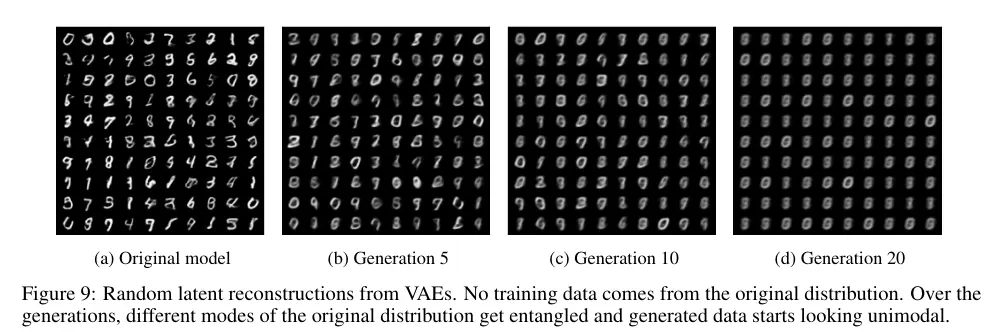

Model Collapse as a term was originally introduced in the paper titled “The Curse of Recursion: Training on Generated Data Makes Models Forget” (arXiv:2305.17493) in May 2023. To put it simply, Model Collapse is when a machine learning model’s output degrades over successive generations of training, specifically when part (or all) of the training data was generated by AI models rather than being “real”. Researchers have shown this to be a genuine problem, with LLM performance degrading substantially after a handful of generations trained solely on the output of previous generations. It’s also a known problem in non-text models like Variational Autoencoders (VAEs), where repeated training on generated samples causes the outputs to collapse to a few modes. You can see that degeneration much more clearly in images than in text.

This isn’t too surprising given that LLMs are basically statistical models with some extra sauce. You can compare them to the old Markov chain text generators of the past. Those built their output based upon a set of rules derived from training data. With a Markov text generator you could feed it the contents of some books and have it generate semi-coherent text, with a similar style and cadence to the inputs. LLMs make use of many orders of magnitude more inputs and use a few extra layers to achieve much more impressive feats.
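To make the Markov comparison concrete, here is a minimal sketch of a word-level Markov chain text generator. The function names (`build_chain`, `generate`) and the tiny corpus are my own illustrations, and a first-order chain like this is far simpler than anything you would actually have used on whole books.

```python
import random
from collections import defaultdict


def build_chain(text):
    """Map each word to the list of words that followed it in the training text."""
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return chain


def generate(chain, start, length, seed=0):
    """Walk the chain, picking each next word at random from the observed followers."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length - 1):
        followers = chain.get(output[-1])
        if not followers:  # dead end: this word was never followed by anything
            break
        output.append(rng.choice(followers))
    return " ".join(output)


# Illustrative corpus; real generators were fed whole books.
corpus = "the cat sat on the mat and the dog sat on the rug"
chain = build_chain(corpus)
print(generate(chain, "the", 8))
```

Every pair of adjacent words in the output appeared somewhere in the training text, which is exactly why the result mimics the style of its inputs without understanding them.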

So why is Model Collapse a problem? You can just not train on the output from previous generations, right? Unfortunately, today’s AI models have such a large number of parameters that they need to be trained on web-scale datasets. And what has been cropping up on the internet since these models were made widely available? A lot of LLM-generated content, and not all of it is of high quality.

So are we doomed to a future of Model Collapse or stagnated Large Language Models like many doomsayers proclaim? Probably not.

Why don’t I think so? Well for one thing, not all online content is “AI slop”, even if there is an increasing amount of it. So an apocalyptic Model Collapse in a short time is probably not going to happen. But studies like ahrefs.com’s from May 2025, which suggests that 74% of 900,000 web pages contained “AI-generated content”, do raise some concerns about an Ouroboros-like effect of AI consuming its own output.

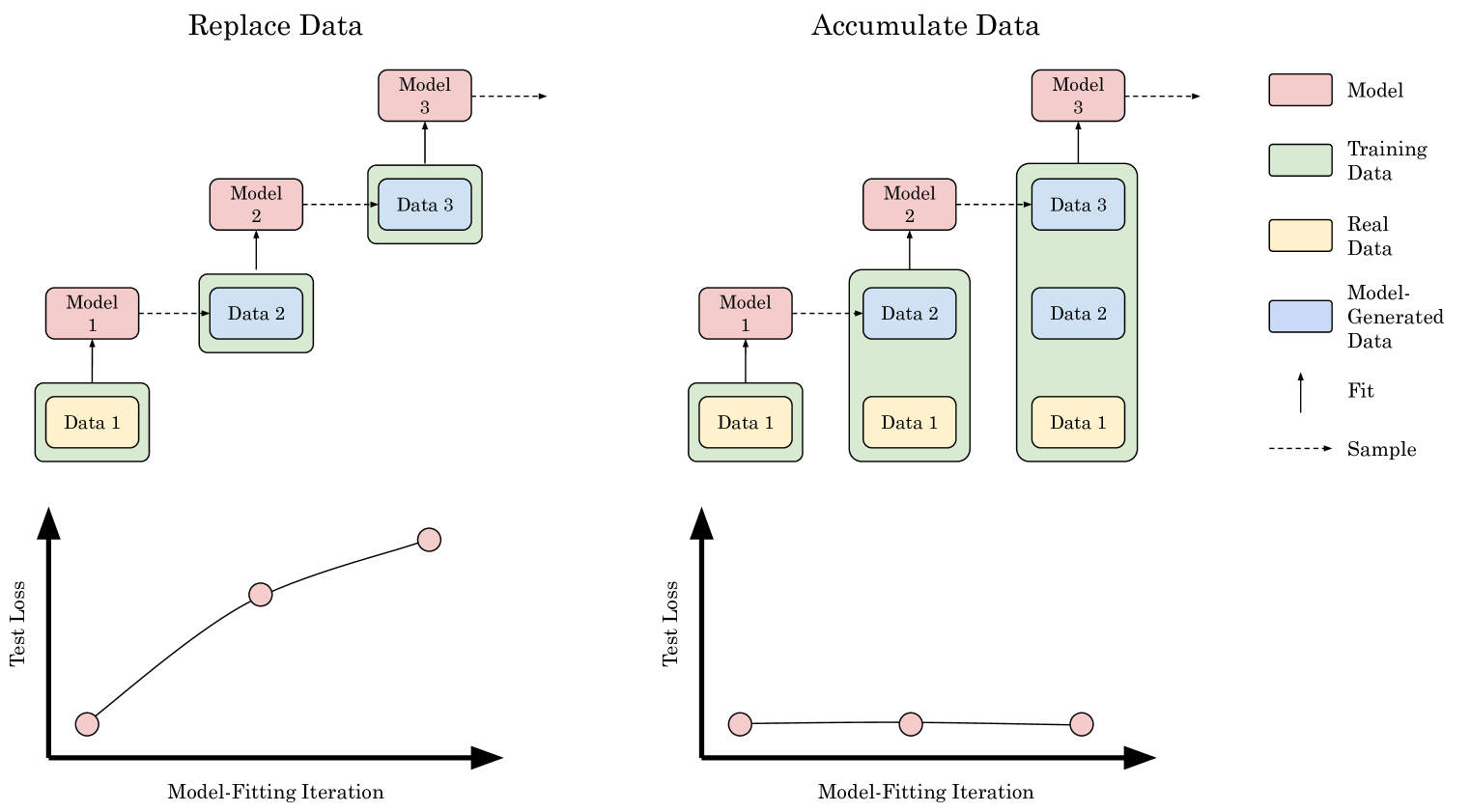

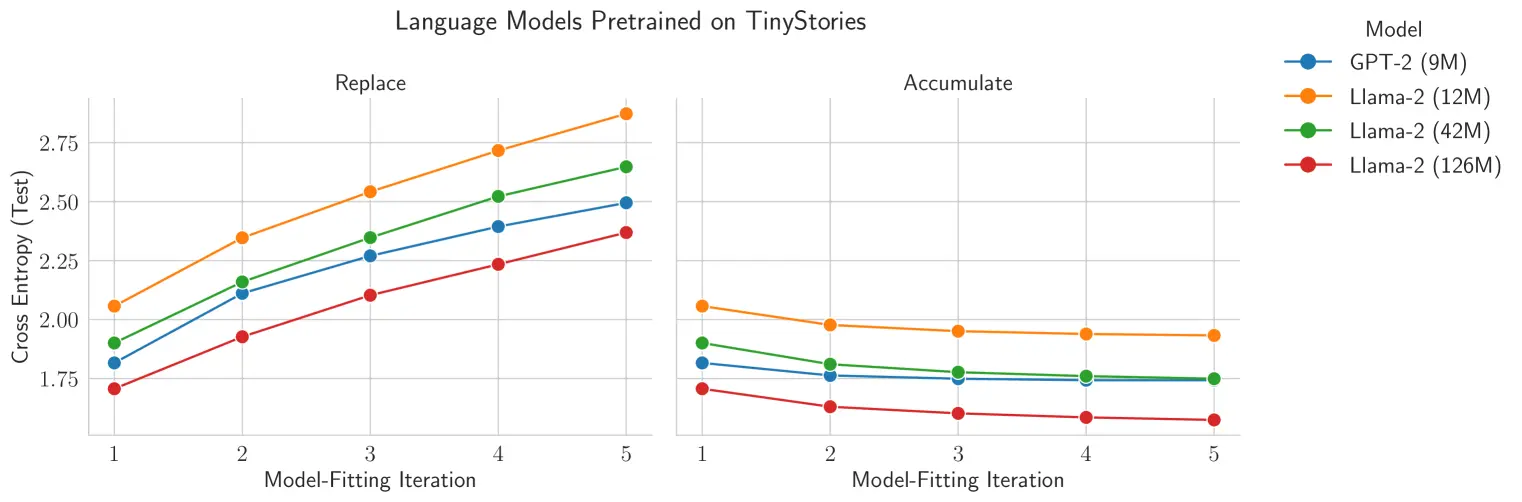

The main reason I think it’s unlikely is the stronger of the two. The training regime used to predict Model Collapse within those few generations was based on “replacing” all the training data for each generation with synthetic data generated by the previous generation of the model. That isn’t how LLMs are generally trained, and it isn’t really a sensible way to train further generations of them. Instead, training is done by “accumulating” training data, that is, using past training data alongside new data. The paper “Is Model Collapse Inevitable? Breaking the Curse of Recursion by Accumulating Real and Synthetic Data” (arXiv:2404.01413) shows, both via empirical testing and through mathematical proof, that accumulating data can avoid Model Collapse in their experiments.
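The difference between “replacing” and “accumulating” can be illustrated with a toy simulation. This is only a sketch under my own assumptions: a one-dimensional Gaussian stands in for the model, “training” is just fitting a mean and standard deviation, and the function names and parameters are hypothetical. It is not the experimental setup from either paper.

```python
import math
import random


def fit_gaussian(samples):
    """Return the sample mean and (unbiased) standard deviation."""
    n = len(samples)
    mean = sum(samples) / n
    variance = sum((x - mean) ** 2 for x in samples) / (n - 1)
    return mean, math.sqrt(variance)


def simulate(mode, generations=400, samples_per_generation=10, seed=0):
    """Repeatedly fit a Gaussian to the training pool and sample from the fit.

    mode="replace":    each generation trains only on the previous model's output.
    mode="accumulate": each generation trains on everything seen so far.
    Returns the fitted standard deviation of the final generation.
    """
    rng = random.Random(seed)
    # Generation 0 is "real" data drawn from a standard normal distribution.
    pool = [rng.gauss(0.0, 1.0) for _ in range(samples_per_generation)]
    mean, std = fit_gaussian(pool)
    for _ in range(generations):
        synthetic = [rng.gauss(mean, std) for _ in range(samples_per_generation)]
        if mode == "replace":
            pool = synthetic          # throw away all earlier data
        else:
            pool = pool + synthetic   # keep real and earlier synthetic data
        mean, std = fit_gaussian(pool)
    return std


print("replace:   ", simulate("replace"))
print("accumulate:", simulate("accumulate"))
```

In the “replace” run the fitted spread shrinks towards zero, a toy version of the outputs collapsing onto a narrow mode, while in the “accumulate” run the original real samples keep anchoring the fit.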

Fantastic, so Model Collapse is not something to worry about at all then? Unfortunately that isn’t totally true either. As always, the answer probably lies in the middle.

Why? Well for one thing, the tests in that paper were done using smaller models, smaller datasets and relatively few generations, and mainly focussed on testing the next-token prediction of the LLMs. The results are still applicable to larger LLMs, but may not show the whole picture. What they do show is that “real” data helps to anchor each generation, and essentially avoids a game of Telephone between generations causing Model Collapse in a short span of generations. But there remains no certainty that other issues won’t arise, and even slow degradation could happen, although this is more of a concern extrapolated from current experiments than something empirically observed.

This potential for a slower, long-term form of Model Collapse is especially relevant if you cap the size of the training data so it doesn’t grow constantly with every generation. And such limits are something that we will realistically have to impose, as we cannot feed ever-increasing amounts of data into LLM training due to physical and temporal constraints.
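A bit of arithmetic shows why a cap matters. The sketch below assumes a deliberately simple model of my own: every generation adds a fixed number of new real and new synthetic documents, and the pool is then uniformly subsampled back down to the cap. The function and parameter names are hypothetical, and real data pipelines are not this tidy.

```python
def real_fraction_over_generations(cap, new_real, new_synthetic, generations):
    """Expected fraction of "real" data in a capped training pool per generation.

    Each generation adds `new_real` real and `new_synthetic` synthetic documents,
    then the pool is uniformly subsampled back down to `cap` documents.
    """
    fraction = 1.0  # assumption: the very first pool is entirely real data
    history = [fraction]
    for _ in range(generations):
        real = fraction * cap + new_real
        total = cap + new_real + new_synthetic
        fraction = real / total  # uniform subsampling leaves the ratio unchanged
        history.append(fraction)
    return history


history = real_fraction_over_generations(
    cap=1000, new_real=100, new_synthetic=300, generations=50
)
print([round(f, 3) for f in history[:5]], "...", round(history[-1], 3))
```

Under these assumptions the share of real data drifts towards `new_real / (new_real + new_synthetic)`, so the anchor provided by the original data weakens with every generation unless fresh real data keeps pace with the synthetic data.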

The paper also doesn’t really address human-AI feedback loops when it comes to accumulating both “real” and synthetic data as it is produced at scale. Put another way, reality is a moving target in terms of “real” and synthetic data, and the two interact with each other in unpredictable ways. For example, what happens when a model is trained on “real” data (say, code) that a human wrote after copying patterns from the previous generation’s suggestions, even though the text itself wasn’t directly generated by the model? And finally, Model Collapse can’t necessarily be quantified by next-token prediction alone.

What that paper and many others clearly show is that training data quality is important, especially for the initial data. This isn’t really a surprise. In the data and wider programming space it’s almost always the case that the more effort you spend cleaning and preparing your data, the better the output you get. We call this garbage in, garbage out.

The fact that initial training data is important actually mirrors other issues with using AI models. For instance, wording questions differently can have a large effect on outputs, and including presuppositions, e.g. “I think the answer is X” or “Why is this unlikely?”, can cause LLMs to agree even when sources or training data disagree. Anyone who has used AI to debug production issues can tell you that you can spend a long time following AI suggestions that are simply irrelevant, trying fixes that are based upon hallucinations, or just going in circles.

So what can be done to avoid the slower Model Collapse? Quite a lot actually.

For one thing, we can improve the training data used by these models in general. Filtering out clearly synthetic data reduces its ratio to “real” data, and using high-quality “real” data will have a clear positive effect. That is to say, you can’t just dump unfiltered data from the internet into your training data and expect good results, especially once that data is saturated with low-quality synthetic text.
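As a rough illustration of what such filtering might look like, here is a minimal sketch that drops exact duplicates, very short documents and text containing obvious giveaway phrases. The marker phrases, the `min_words` threshold and the function name are all hypothetical choices of mine; real pipelines rely on much more sophisticated quality signals than this.

```python
import hashlib

# Hypothetical telltale phrases; a real pipeline would need far better signals.
SYNTHETIC_MARKERS = ("as an ai language model", "as a large language model")


def clean_corpus(documents, min_words=5):
    """Drop exact duplicates, very short documents and obviously synthetic text."""
    seen = set()
    kept = []
    for doc in documents:
        # Normalise case and whitespace so trivial variations count as duplicates.
        normalised = " ".join(doc.lower().split())
        digest = hashlib.sha256(normalised.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # exact duplicate after normalisation
        seen.add(digest)
        if len(normalised.split()) < min_words:
            continue  # too short to be useful training text
        if any(marker in normalised for marker in SYNTHETIC_MARKERS):
            continue  # contains an obvious giveaway phrase
        kept.append(doc)
    return kept


docs = [
    "The quick brown fox jumps over the lazy dog.",
    "the quick  brown fox jumps over the lazy dog.",
    "As an AI language model, I cannot help with that request.",
    "Too short.",
    "Model collapse is a degradation across training generations.",
]
print(clean_corpus(docs))
```

Simple phrase matching like this only catches the most careless synthetic text, which is why the quality of the “real” data you keep matters at least as much as what you throw away.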

More importantly, we can specialise Large Language Models, which is already being actively done. This is done by filtering training data to bias towards the more specialised tasks we want the model to achieve, and adjusting the post-training towards solving tasks in that more specific area. Essentially, you can use one of the larger models as a base and tailor it more towards a specific area. Provided it is used correctly, a specialised model will very likely outperform a more generalised one. This is why I think LLMs are useful tools.

Speaking of tools, giving LLMs (especially specialised ones) access to tools can greatly help reduce errors in their outputs by biasing them to use the tool output over their own. That can reduce the classic “how many Rs are there in strawberry?” mistakes models make. LLM tool use is a topic for another article, though.

Overall, I see a potential long-term future of AI models not collapsing under the weight of generated “slop”, but it requires both users and model publishers to be careful in how they use the data from AI models. In my opinion, AI is here to stay, and LLMs are useful tools, but they’re not all they’re hyped up to be. I base this opinion on my own anecdotal use of AI, anecdotes of colleagues, reading up on how they work, news/media reports, and looking into research around their uses and potential issues.
